Nota AI Reduces Memory Usage of Upstage's Solar LLM by 72%, Demonstrating Proprietary Quantization Technology

PR Newswire

SEOUL, South Korea, March 5, 2026

New "Nota AI MoE Quantization" approach preserves model performance while significantly improving memory efficiency

SEOUL, South Korea, March 5, 2026 /PRNewswire/ -- Nota AI, an AI optimization technology company, announced that it has developed a next-generation quantization technology that significantly compresses Solar, a high-performance large language model (LLM) developed by Upstage, while maintaining high accuracy. The breakthrough reduces inference costs and improves processing speed without sacrificing performance.

The development was carried out as part of the "Sovereign AI Foundation Model Project" led by South Korea's Ministry of Science and ICT. By applying Nota AI's lightweighting and optimization technologies to Solar Open 100B, the company significantly improved memory efficiency while preserving model performance. The achievement lowers the memory requirements of the 100B-parameter model while maintaining its capabilities, enabling more practical deployment of Korean AI foundation models in physical AI environments such as mobility and robotics.

The newly developed technology focuses on addressing technical challenges associated with the Mixture of Experts (MoE) architecture, which is rapidly gaining adoption in next-generation LLMs. Conventional quantization methods typically compress the entire model uniformly without considering the distinct characteristics of individual expert models. To overcome this limitation, Nota AI developed a proprietary algorithm optimized for MoE architectures, called "Nota AI MoE Quantization."

The approach is designed to minimize quantization distortion during the inference process of MoE models. Unlike conventional methods that uniformly reduce precision across all operations, Nota AI's algorithm selectively preserves precision in critical components while compressing less sensitive parts of the model. This enables effective model compression while minimizing performance loss.
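The general idea of keeping more precision in sensitive components can be illustrated with a minimal sketch. This is not Nota AI's proprietary algorithm, whose details are not disclosed in this release. It is a generic mixed-precision example, and the toy model, the choice of the router as the "sensitive" component, the 4-bit and 8-bit widths, and all function names are assumptions made purely for illustration.

```python
import random


def quantize(weights, bits):
    """Symmetric uniform quantization followed by dequantization.

    Each weight is snapped to the nearest of 2**bits - 1 evenly spaced levels.
    """
    qmax = 2 ** (bits - 1) - 1                      # 7 for 4-bit, 127 for 8-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0  # step size between levels
    return [round(w / scale) * scale for w in weights]


def mean_sq_error(original, approx):
    """Average squared difference, used here as a simple distortion measure."""
    return sum((x - y) ** 2 for x, y in zip(original, approx)) / len(original)


def mixed_precision(components, sensitive, low_bits=4, high_bits=8):
    """Quantize each component; those named in `sensitive` keep more bits."""
    plan = {}
    for name, weights in components.items():
        bits = high_bits if name in sensitive else low_bits
        plan[name] = (bits, quantize(weights, bits))
    return plan


def average_bits(plan):
    """Average bit width per weight across all components."""
    total = sum(len(q) for _, q in plan.values())
    return sum(bits * len(q) for bits, q in plan.values()) / total


# Hypothetical toy model: one small router and two larger experts.
# Treating the router as the sensitive part is an assumption for this sketch.
random.seed(0)
toy_model = {
    "router": [random.gauss(0, 1) for _ in range(16)],
    "expert_0": [random.gauss(0, 1) for _ in range(256)],
    "expert_1": [random.gauss(0, 1) for _ in range(256)],
}

plan = mixed_precision(toy_model, sensitive={"router"})
for name, (bits, q) in plan.items():
    print(name, bits, "bits, MSE:", mean_sq_error(toy_model[name], q))
print("average bits per weight:", average_bits(plan))
```

In this toy setup the small router keeps 8 bits while the much larger experts drop to 4 bits, so the average bit width stays close to 4 while the component marked sensitive sees far less distortion. A real system would need a principled way to measure sensitivity, which this sketch does not attempt.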

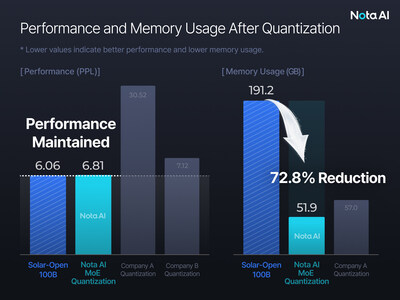

Applying the technology to the Solar 100B model yielded significant improvements compared with conventional quantization methods. Nota AI reduced Solar's memory usage from 191.2GB to 51.9GB, a 72.8% reduction. At the same time, the model maintained performance comparable to the original version, achieving a perplexity (PPL) score of 6.81, close to the baseline model's 6.06 (lower perplexity is better). In contrast, some generic quantization approaches resulted in performance degradation exceeding fivefold. Nota AI has filed a patent application for the technology to strengthen its intellectual property portfolio.
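The reported figures can be sanity-checked with simple arithmetic. The sketch below uses only the numbers quoted above. The bits-per-parameter estimate additionally assumes that 1GB means 10^9 bytes, that the model has exactly 100 billion parameters, and that the reported memory is dominated by weights. None of these is stated in the release, so that estimate is indicative only.

```python
def reduction_percent(before_gb, after_gb):
    """Relative memory saving, in percent."""
    return (before_gb - after_gb) / before_gb * 100


def relative_increase_percent(baseline, value):
    """How much `value` exceeds `baseline`, in percent."""
    return (value - baseline) / baseline * 100


def bits_per_parameter(size_gb, n_params):
    """Average storage per parameter, assuming 1 GB = 1e9 bytes (assumption)."""
    return size_gb * 1e9 * 8 / n_params


# Figures quoted in the release.
BEFORE_GB, AFTER_GB = 191.2, 51.9
BASELINE_PPL, QUANTIZED_PPL = 6.06, 6.81
N_PARAMS = 100e9  # assumption: the nominal "100B" taken literally

print("memory reduction (%):", reduction_percent(BEFORE_GB, AFTER_GB))
print("perplexity increase (%):", relative_increase_percent(BASELINE_PPL, QUANTIZED_PPL))
print("bits/param before:", bits_per_parameter(BEFORE_GB, N_PARAMS))
print("bits/param after:", bits_per_parameter(AFTER_GB, N_PARAMS))
```

With the rounded figures quoted above, the reduction works out to roughly 72.86%, in line with the stated 72.8%, and the perplexity of the quantized model is about 12% higher than the baseline. Under the stated assumptions, the compressed model averages a little over 4 bits per parameter.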

While conventional quantization techniques often sacrifice model performance to reduce memory usage, Nota AI's technology demonstrates that it is possible to maintain performance while delivering AI services faster and to more users on limited GPU infrastructure. As a result, enterprises can more easily deploy large-scale LLMs on their own devices, including models that were previously difficult to implement due to hardware constraints.

The significant reduction in Solar 100B's memory footprint while preserving performance also creates new opportunities for deploying high-performance AI in real-world on-device environments, including robotics and automotive systems. Additionally, the technology enables organizations facing limited access to high-end GPU infrastructure to serve more users on the same hardware, directly contributing to lower operational costs.

"This achievement is meaningful because we were able to apply Nota AI's proprietary quantization technology to Solar 100B, a Korean AI foundation model, significantly reducing memory usage while maintaining performance," said Myungsu Chae, CEO of Nota AI, said, "As demand grows for deploying large-scale models directly on devices, Nota AI's lightweighting and optimization technologies will play a critical role in enabling high-performance AI."

View original content to download multimedia: https://www.prnewswire.com/news-releases/nota-ai-reduces-memory-usage-of-upstages-solar-llm-by-72-demonstrating-proprietary-quantization-technology-302706619.html


SOURCE Nota AI